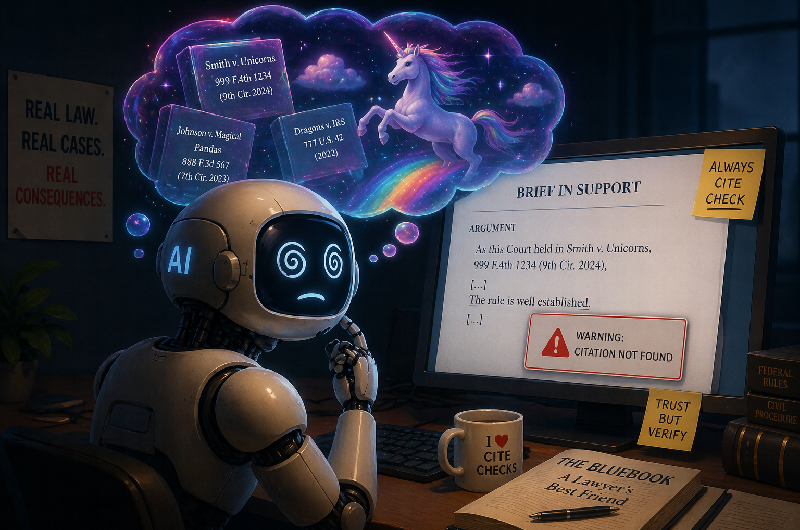

A major law firm is implementing safeguards to prevent artificial intelligence from fabricating case citations in court filings. The firm recognized that AI language models can generate plausible-sounding but entirely fictional legal precedents, creating serious risks for attorney credibility and case outcomes.

The problem stems from how AI systems work. These tools predict probable next words based on training data, sometimes producing invented case names, docket numbers, and judicial holdings that sound authentic but never existed. When attorneys submit filings containing fabricated authority, courts reject the arguments and discipline may follow.

The firm's solution involves mandatory review protocols requiring lawyers to verify all case citations before submission. Staff members must confirm that each cited case exists and that the filing accurately describes its holding. The firm also restricts which AI tools attorneys can use for legal research and prohibits relying on AI-generated case law without independent verification.

This response reflects broader industry concern. Courts have already sanctioned attorneys for submitting AI-hallucinated cases. The New York State Bar Association issued ethics guidance warning lawyers that they remain responsible for the accuracy of their filings regardless of how they draft documents. Bar associations in other jurisdictions have begun addressing the issue through ethics opinions.

The firm's approach signals that even sophisticated legal organizations cannot simply trust AI outputs. Human verification remains essential.